4. Reduction

Epistemic Reduction is a process that discovers higher level abstractions in lower level data by discarding everything at the lower layer that it recognizes as irrelevant

We have seen the power of Models. We have introduced the two problem solving meta-strategies of Reductionism and Holism. We also noted that the creation and use of Models requires an Intelligent agent that Understands the problem domain; someone or something has to perform the Reduction. I will now discuss Reduction in some detail.

Until 2012, only humans and other animals with brains could perform Reduction. Now our Deep Neural Networks (DNN) can perform limited Reduction. How do brains and DNNs accomplish this, and how can we improve these algorithms?

This may be, to some readers, the most rewarding blog in this series because it gives you the opportunity to learn a new and useful skill. Most people never think about the world at this level. Knowledge of Reduction provides a new point of view that you can use to better understand your environment, other intelligent agents around you, and modern AI systems.

Definition of Reduction

Reduction is a process that discovers higher level abstractions in lower level data

We will initially note that Reduction is exactly the same as Abstraction. Why do we need a new word? Because the term “Abstraction” is mostly used by scientists already operating in a pure Model space, seeking a higher level of abstraction in that space. But to them, “Abstraction” is something that just magically happens in their heads, since there are no scientific theories for how Abstraction works. There cannot be, since Abstraction is a concept in Epistemology, not Science.

AI researchers are starting from something much closer to our rich mundane reality, where there is a lot of confounding context. We are solving the meta-problem of how to move from there into a space that is sufficiently abstract to solve the problem at hand. Here, Reduction is a much more appropriate term. We can’t abstract a red pixel or the letter “b”, but we can Reduce a rich context containing that pixel or letter into a higher level concept.

We Are Swimming In Reduction

Paradoxically, one of the hardest things about teaching Reduction is that we don’t see the need to learn about it because we all do it all the time, every millisecond, and the resulting Reductions (Models) become available to our conscious minds as if “by magic”. Brains Reduce away 99.999% of their sensory input, but this process is subconscious and hence invisible to us. The situation is (supposedly) much like that of a fish swimming in water. We are all masters of Reduction, but we don’t know how we do it or that we even do it. We didn’t know this would ever matter. And generally, it doesn’t.

Well, it matters in Epistemology, and it matters in AI, since we need to actually implement that “magic”. We as Epistemologists must know how Abstraction is actually performed. And we give the Epistemology level equivalent of Abstraction the name “Reduction” because that’s the recipe for how to accomplish it. We Reduce our rich mundane Reality by discarding (reducing away) what’s irrelevant. And by using the name “Reduction” we (as AI Epistemologists) keep reminding ourselves how it is properly done.

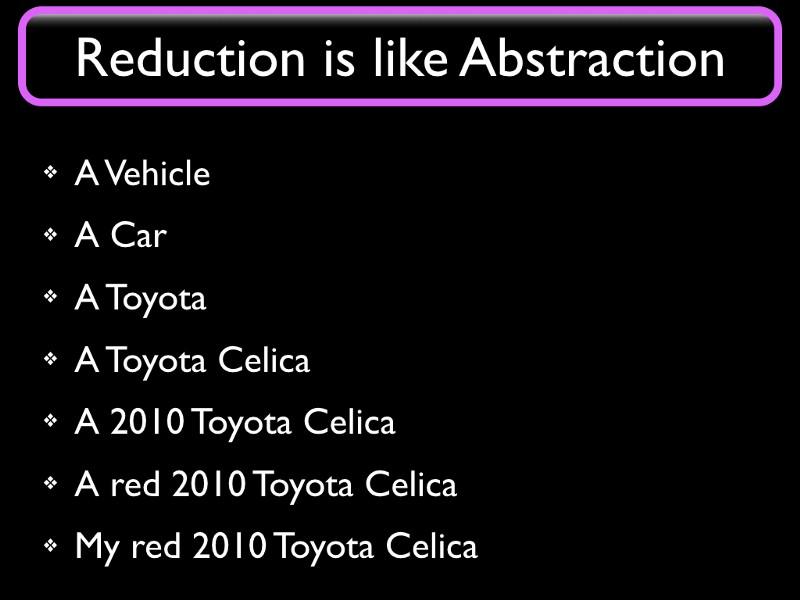

Consider the following descriptions of a car. The slide is meant to be read from the bottom up, to match “abstraction levels” from low to high.

If I’m driving to work, I had better be driving my car. If the police are looking for a stolen car, they would be looking for a Red 2010 Toyota Celica. If I’m buying a new car then I might be looking for just a new Toyota Celica. And a self-driving car would likely only need to Understand whether an obstacle is a vehicle or not, in order to Model maximum speed for future movement.

We see that we want to pick the appropriate level of abstraction to deal with the same object (or topic) in different situations. But more importantly, we see that we can get from a more detailed description (at the bottom) to a more generic one (higher up) by simply discarding some detail.

I hasten to point out that Reduction is more complicated than this simple example of decreasing specificity shows, but we need to start somewhere and this image allows us to form intuitions that will serve for a while. True Reduction involves operations like shifting from syntax to semantics or from instance to type; the appearance of “car” as an abstraction of “Toyota”, and the step from “My Toyota” to “A Toyota” illustrate these steps. Algorithms for these things are known.

Salience

Part of the trick is to know what to discard. At each level of abstraction, something can typically be identified as the least important property. “Red” and “Celica” are more significant than “2010” for anyone looking for a car. If we had started from “My red 2010 Toyota truck”, then the word “truck” would not be discarded until the top level. Reduction requires Understanding what’s relevant; in Reduction we “keep that which is Salient”. More later.
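The idea of discarding the least Salient property at each level can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions: the property names and the numeric salience scores are invented here to match the car example, and real Reduction involves far more than dropping fields from a record.

```python
# Hypothetical salience scores for someone looking for a car.
# Higher means "keep longer". The numbers are illustrative assumptions.
SALIENCE = {
    "owner": 1,   # "My": only matters to me
    "year": 2,    # "2010"
    "color": 3,   # "red"
    "model": 4,   # "Celica"
    "make": 5,    # "Toyota"
    "kind": 6,    # "car" or "truck": kept until the top level
}


def reduce_once(description):
    """Return a copy of the description without its least salient property."""
    if len(description) <= 1:
        return dict(description)
    least = min(description, key=lambda prop: SALIENCE[prop])
    return {prop: value for prop, value in description.items() if prop != least}


def abstraction_ladder(description):
    """List every level, from the most detailed description to the most generic."""
    ladder = [dict(description)]
    while len(ladder[-1]) > 1:
        ladder.append(reduce_once(ladder[-1]))
    return ladder


my_car = {
    "owner": "My",
    "color": "red",
    "year": "2010",
    "make": "Toyota",
    "model": "Celica",
    "kind": "car",
}

for level in abstraction_ladder(my_car):
    print(" ".join(level.values()))
```

Each pass drops exactly one property, so the printed ladder runs from the full description down to the bare word "car". Changing the scores changes the order in which detail disappears, which is the point: what counts as Salient depends on the task.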

Partial Reductions

Most of the time we do NOT perform Reduction all the way to Models. I cannot stress this enough. We discuss Reduction to Models for pedagogical reasons; it is easy to initially see the context-free Model as the goal of Reduction. In reality, in brains, we can stop Reducing the moment we recognize that we have a working answer or response, such as a command to contract some muscle or having Understood the meaning of a sentence subconsciously. At this point, there is still some residual context but we use that context productively rather than discard it to move to higher levels.

Some people claim we use Models for all our thinking, but I’m using (in these blogs) “Model” only to describe a completely context free Abstraction. F=ma is an example of that. There is no need to check whether a car is a Red car or a Toyota; the equation works not only for all cars but for all forces, masses and accelerations. We might come up with a special equation for acceleration of Tesla cars, which would require different inputs like battery charge level and software settings; that would not be a context free Model since it would not work on a Toyota. For almost all tasks (basically, in everything except Science, and even there only rarely) we only perform as much Reduction as is necessary to get the job done. When learning to ski, you only figure out how you yourself need to perform given your body and equipment; you do not need to parameterize your skiing skills for someone with twice the body mass, because that would be useless to you for the purpose of your own skiing. But a scientist would have to go that far, for instance when creating a skiing video game or designing a new ski, in order to parameterize away one more piece of context from the Model they are creating.
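The difference between a context free Model and a context-bound formula shows up directly in what each one asks for as input. The sketch below is an assumption-laden toy: `force` is the ordinary F=ma, while `toy_tesla_acceleration` and every number inside it are invented purely for illustration and are not real vehicle data.

```python
def force(mass_kg: float, acceleration_ms2: float) -> float:
    """Context free Model: F = m * a. Nothing here knows what is accelerating."""
    return mass_kg * acceleration_ms2


def toy_tesla_acceleration(battery_charge: float, sport_mode: bool) -> float:
    """Context-bound toy formula, invented for illustration only.

    battery_charge is a fraction between 0 and 1; sport_mode stands in
    for a software setting. Neither input exists for a Toyota, so this
    function cannot be reused there.
    """
    if not 0.0 <= battery_charge <= 1.0:
        raise ValueError("battery_charge must be between 0 and 1")
    peak_ms2 = 6.0 if sport_mode else 4.0           # made-up setting effect
    return peak_ms2 * (0.5 + 0.5 * battery_charge)  # made-up charge effect


# The same Model serves a car and a thrown ball without modification.
print(force(1200.0, 3.0))
print(force(0.145, 40.0))

# The context-bound formula only makes sense for the one kind of car.
print(toy_tesla_acceleration(battery_charge=1.0, sport_mode=True))
```

The first function has been Reduced until no residual context is left in its parameters; the second still carries context in its inputs, which is what makes it a partial Reduction rather than a Model in the sense used here.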

If we consider the enormous amount of subconscious activity that happens in the brain we can safely say that partial Reductions are the most common Reductions. For instance, when we take a step forward, our subconscious has analyzed our posture and velocity by using Reduction based on low level nerve signals and is commanding leg muscles to contract in a precisely timed sequence. This activity is something we are unaware of; most of us don’t even know what leg muscles we have. And there would be no time to perform Reduction all the way to Models. That process takes a minimum of a half second and you don’t have that kind of time available to respond to an imbalance when walking or skiing.

Reduction in Society

Most of us get paid to Understand whatever we need to Understand in order to perform our jobs. In other words, most of us get paid to do Reduction. If you are approving building permits, you Reduce a stack of forms to a one-bit verdict of “Approved” or “Rejected”. We excel at Reduction, and this is the main reason most of us haven’t been replaced by robots. But we see that when future Understanding Machines can perform Reduction by themselves, then we are unlikely to get paid for it.

Levels of Reduction

Suppose a young man and a young woman fall in love, something happens to mess it all up, and then they sort this out and re-unite.

This is what happened in the man’s “rich mundane reality”. Suppose the man wants to share this experience, because there was some moral to the story that he thinks would be interesting to others and possibly important.

He could analyze what happened and figure out which were the key events in the saga and then have actors on a stage re-enact the story as a play. This is a Reduction because the boring parts of the story would not be part of the play. They are discarded as irrelevant. But the story would be acted out by real people in front of a live audience. If you are in the audience, you can move your head to see behind any actor on the stage and you can clearly see everything on the stage, not just one actor speaking at a time.

He could make a movie about it. Now your point of view is predefined by the camera angle and cropping. You can no longer see behind an actor, and you can often only see those actors that are involved in the main action.

He could write a book about it. We no longer can see even the people described in the book, except in our imagination.

A critic reviewing the theatre play may Reduce it to “Boy meets girl, boy loses girl, boy gets girl”.

A drama school graduate may summarise it as “A double-reversal plot”. This is a description that is so free from context (doesn’t even specify boys or girls) that it could be argued it qualifies to be called “A Model”.

Plays, Movies, Books, Stories, Tropes, etc are all Partial Reductions of Reality, and some are more Reduced than others. Just like in the My Red Toyota case, we need to find the appropriate level of Abstraction to work with.

The young man in the example, when writing a book or a screenplay, has much in common with a Scientist trying to describe something in nature in a re-usable context free manner by Reducing it to a Model. They are Model Makers, or are at least performing partial Reduction. They are discarding the irrelevant bits.

The Opposite of Reduction

We also need to be able to move in the opposite direction, from Models to Reality. Or at least from more abstract Partial Models to Partial Models closer to reality.

When an actor is given a screenplay they know it only contains rough directions for what to do and what lines to say. The actor’s job is to “give a little of themselves” to flesh out the screenplay into actual actions, including creating (synthesizing) the appropriate display of emotions, tone of voice, and body language. They use their experience as people and as actors; they use elements of their past lives and skills they have acquired by training to create something people in the audience might relate to. For example, they may re-purpose a personal experience (“be as sad as when my hamster died”), things they learned in drama school (speaking, singing, dancing, swordplay), or what they have picked up from other actors (“What would Bogart do?”), from fiction, from other movies and plays, etc.

The actor’s art is to convey whatever the script intends to convey (emotions, a morality cookie, a political position, titillation, surprise, etc.), starting from a simple Model (the screenplay). Their job is similar to an engineer’s when they are faced with a problem and use a Model to solve it. The engineer would use their experience to decide that “m” is the mass of the car and not the tire pressure. The actor decides that “sadness” is more appropriate than “grief” for a certain scene, etc.

I call this process, which is “the opposite of Reduction”, by the name it goes by in problem solving: “Application”. We use a Model to simplify a problem situation, moving it into an abstract and purer “Model space”. We solve the problem there (by performing math, perhaps) and then “Apply” the answer to our rich reality, to the problem we are trying to solve.
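The round trip from rich reality into Model space and back can be sketched as three small steps. This is a minimal illustration, assuming a made-up situation record and made-up numbers; the function names are hypothetical, and the hard part (knowing which fields matter) is simply hard-coded here, whereas in practice it takes Understanding.

```python
def reduce_to_model_inputs(situation):
    """Reduction: keep only what F = m * a needs and discard the rest."""
    return {
        "force_n": situation["engine_force_n"],
        "mass_kg": situation["mass_kg"],  # "m" is the car's mass, not its tire pressure
    }


def solve_in_model_space(inputs):
    """Pure Model: a = F / m. This step knows nothing about cars."""
    return inputs["force_n"] / inputs["mass_kg"]


def apply_to_situation(situation, acceleration_ms2):
    """Application: restate the abstract answer in the original context."""
    return (
        f"The {situation['color']} {situation['make']} "
        f"accelerates at {acceleration_ms2:.1f} m/s^2"
    )


# A "rich" situation with context the Model has no use for (illustrative values).
situation = {
    "make": "Toyota",
    "color": "red",
    "mass_kg": 1200.0,
    "tire_pressure_kpa": 220.0,
    "engine_force_n": 3600.0,
}

inputs = reduce_to_model_inputs(situation)
acceleration = solve_in_model_space(inputs)
print(apply_to_situation(situation, acceleration))
# prints: The red Toyota accelerates at 3.0 m/s^2
```

Only the middle function is a Model. The first and last steps are where experience enters: choosing what to discard on the way in, and putting context back on the way out.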

Many of you may recognize the word “Application” or its abbreviation “App”. That’s not as farfetched as it might seem; apps are software-based Models.

Reduction and Application in Brains

Back to the issue of Partial Reductions. Consider the actor reading a screenplay. They are using their eyes to gather “pixels” of color and orientation. The brain then performs pattern matching (Reduction) from these low level signals to letters, to words, to language, to higher level concepts like love and separation, and eventually to a high level Understanding of the playwright’s intents. The actor then takes this high level Understanding and, by performing Application, adds their own Experience to the script, to get closer to reality in their performance.

Our brains are capable of moving up and down many levels of abstraction at once. Perhaps they track all of them simultaneously, keeping layers of abstraction separate. This is a clue for why Deep Neural Networks perform better than shallow ones, which is what we’ll discuss next.